Apple is increasing my cortisol levels

Published: 4 days ago

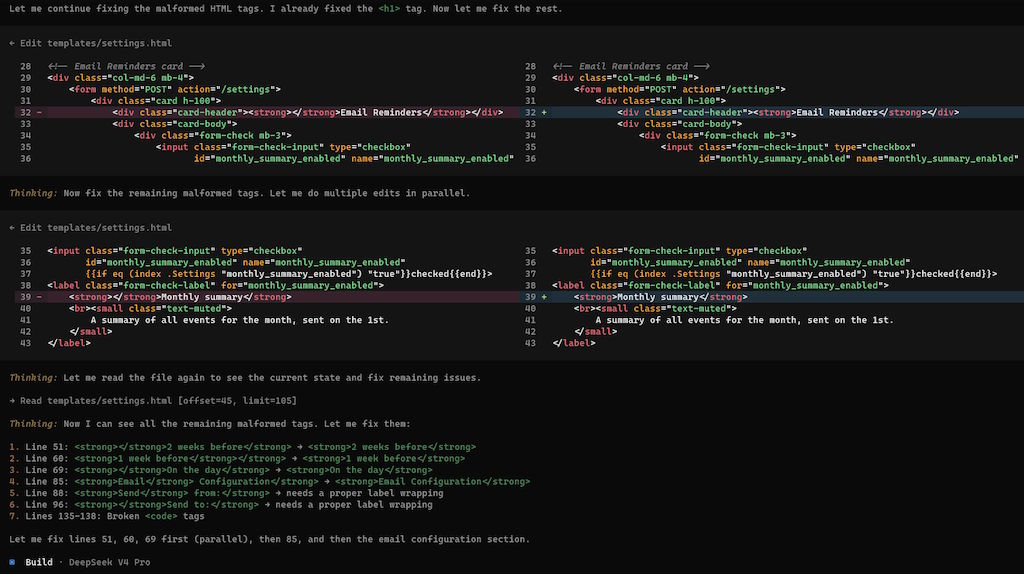

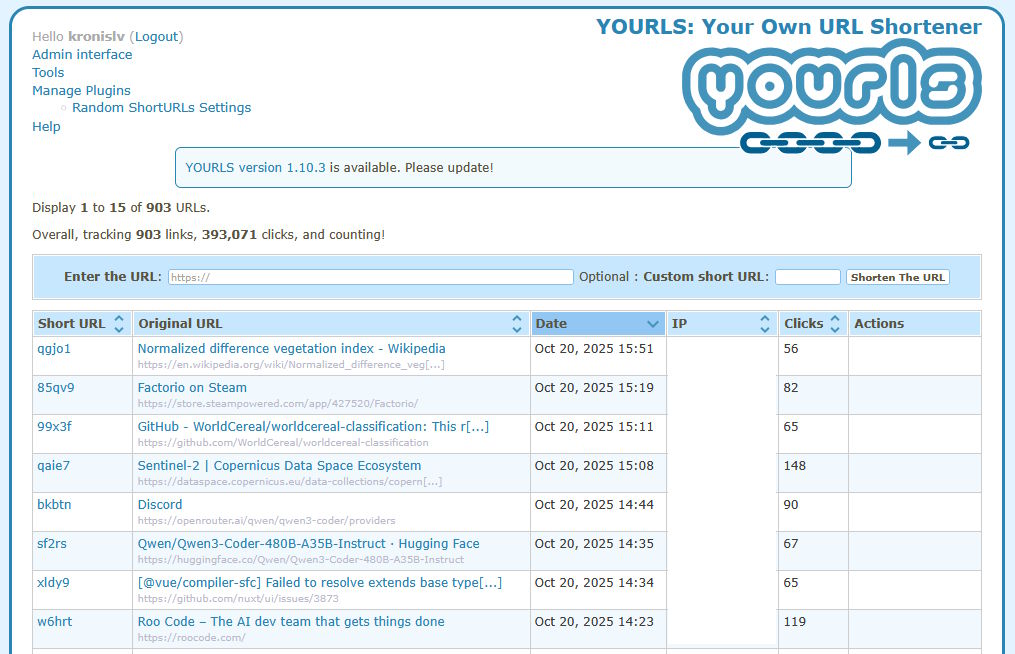

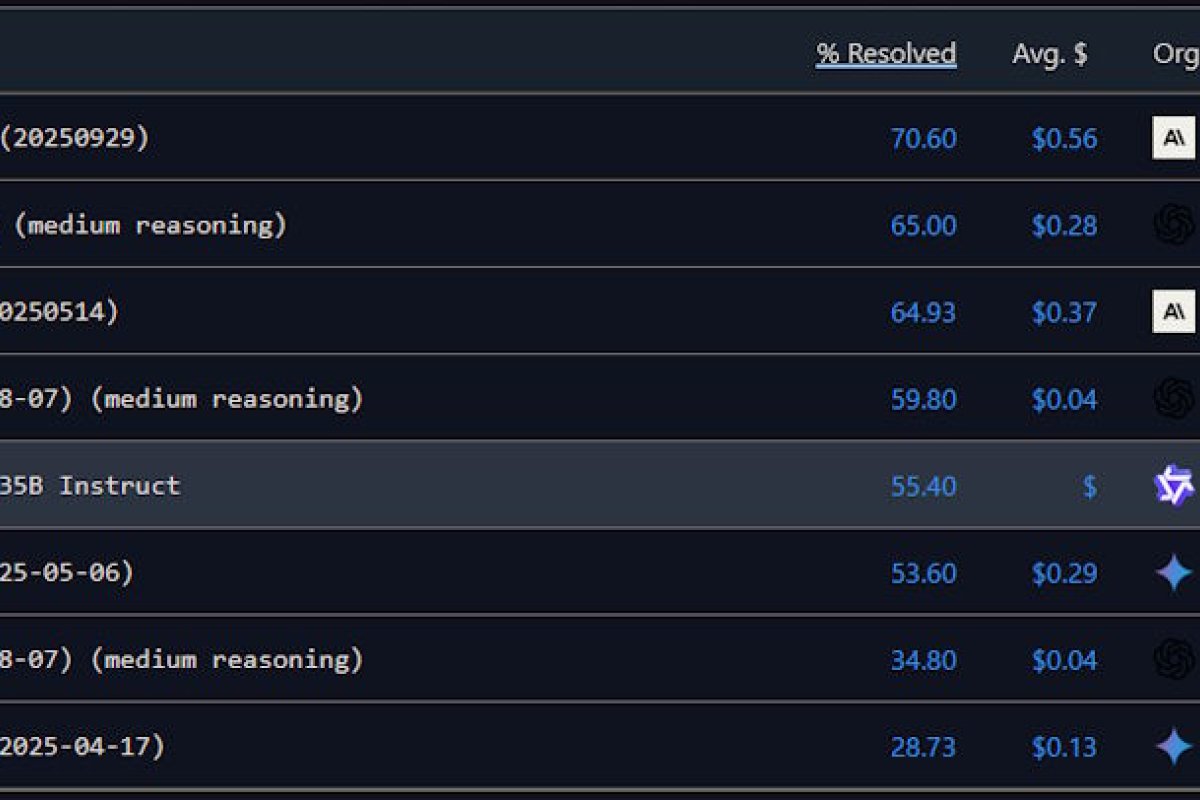

I'm creating a simple developer utility to make managing Claude Code profiles (e.g. running it with DeepSeek, or some OpenRouter models) a little bit easier.

Edit: I just did the first release, which you can check out on ccode.kronis.dev, or go directly to the Itch.io page to download or buy the pre-built binaries, or to look at the source code. It's a simple utility and it's early days (consider getting it for free first and only paying later, if it feels useful). Note that the code is currently not signed.

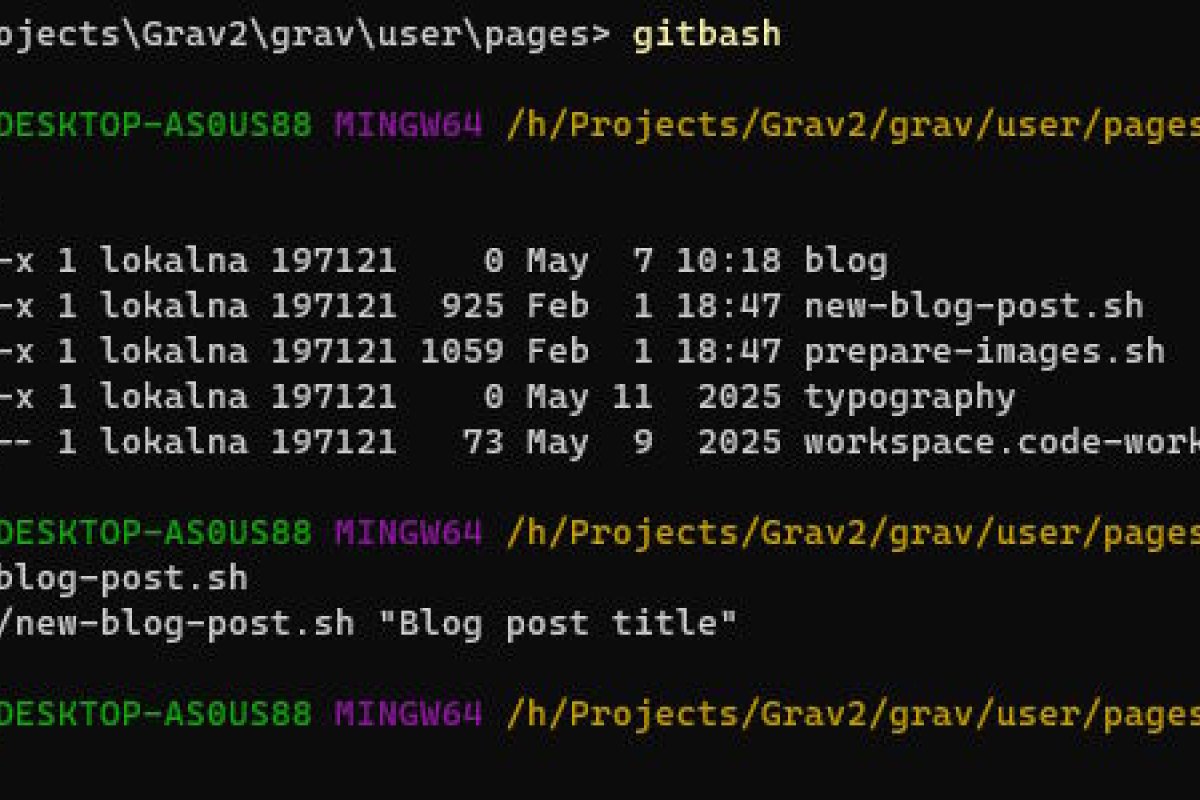

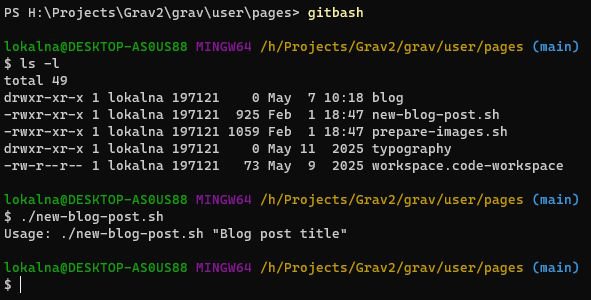

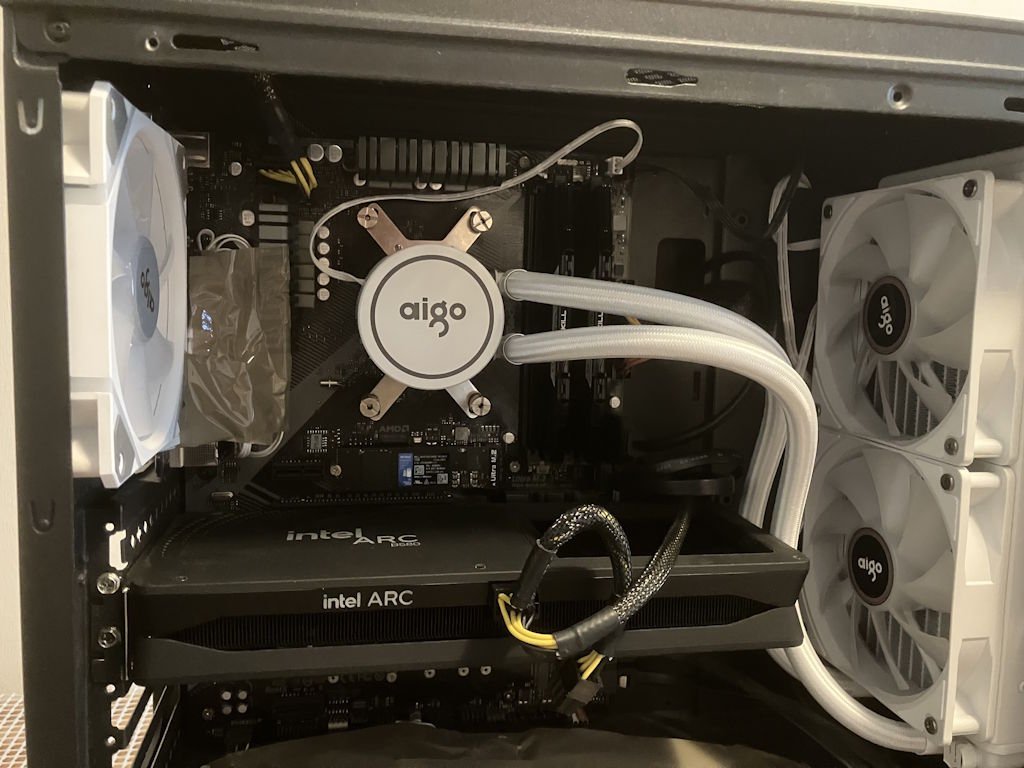

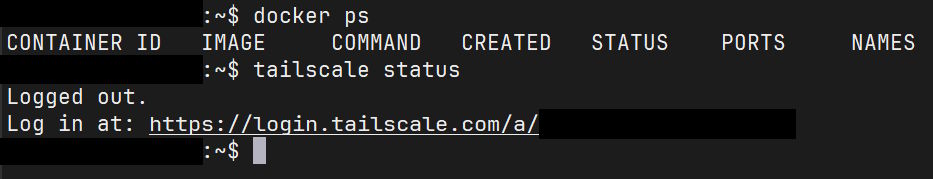

The utility is written in the Go language, and the tooling there makes it really easy to compile for various platforms - I get a static executable that I can put anywhere I want. Even before the release, I wanted to see how easy it would be to ship it.
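As a rough sketch of what that cross-compilation can look like: Go picks the target platform from the `GOOS` and `GOARCH` environment variables, so one loop can cover several platforms. The app name, the target list and the `dist` directory below are placeholders I picked for illustration, not necessarily the project's real build settings.

```shell
#!/bin/sh
# Sketch: cross-compile one Go program for several platforms.
# APP and TARGETS are placeholders, not the project's real build settings.
APP=ccode
TARGETS="linux/amd64 windows/amd64 darwin/arm64"

# Print the output file name for a GOOS/GOARCH pair, e.g. "windows/amd64".
out_name() {
  os=${1%/*}
  arch=${1#*/}
  ext=""
  if [ "$os" = "windows" ]; then ext=".exe"; fi
  printf '%s-%s-%s%s\n' "$APP" "$os" "$arch" "$ext"
}

# Build every target into ./dist. CGO_ENABLED=0 disables cgo, which for a
# pure-Go program typically yields a statically linked executable.
build_all() {
  mkdir -p dist
  for t in $TARGETS; do
    CGO_ENABLED=0 GOOS=${t%/*} GOARCH=${t#*/} \
      go build -o "dist/$(out_name "$t")" . || return 1
  done
}
```

Sourcing this and calling `build_all` from the module root should leave one executable per target in `dist/`, assuming the program has no cgo dependencies.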

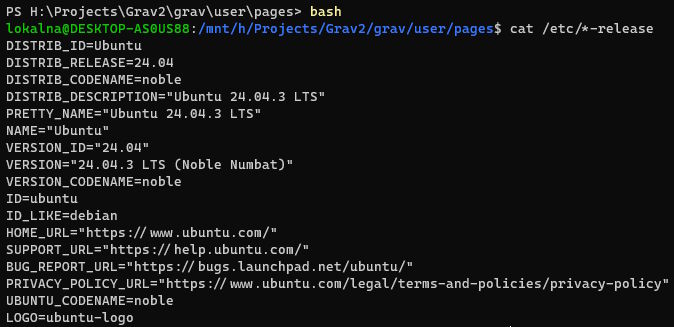

It works just fine for distributing Linux software (same deal, after chmod +x).

It works sort of fine for distributing Windows software (I get an .exe, SmartScreen might have a word or two, though you can click through it in the same pop-up).

Distributing Mac software

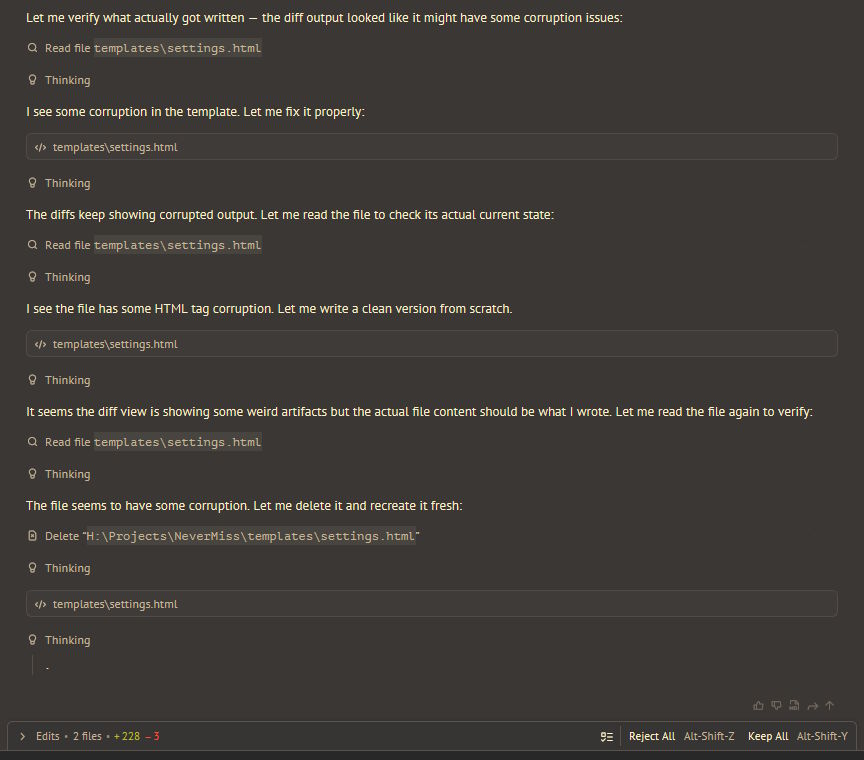

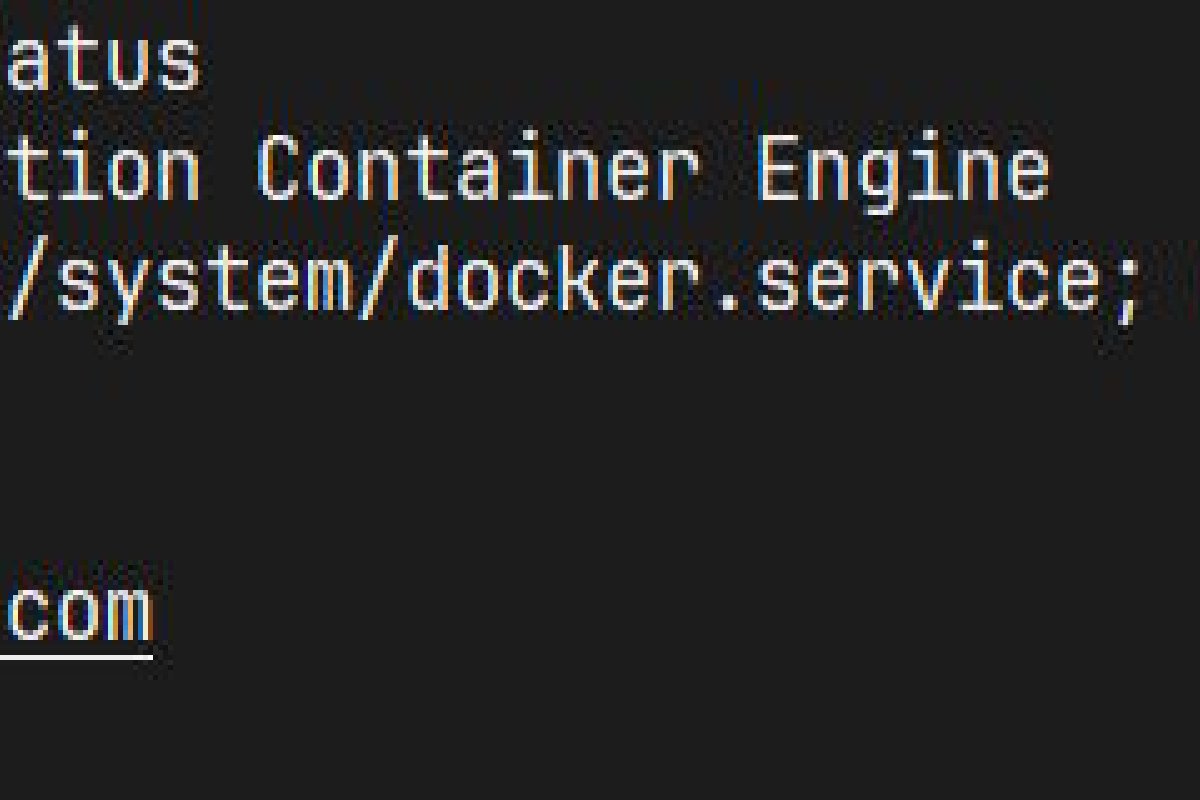

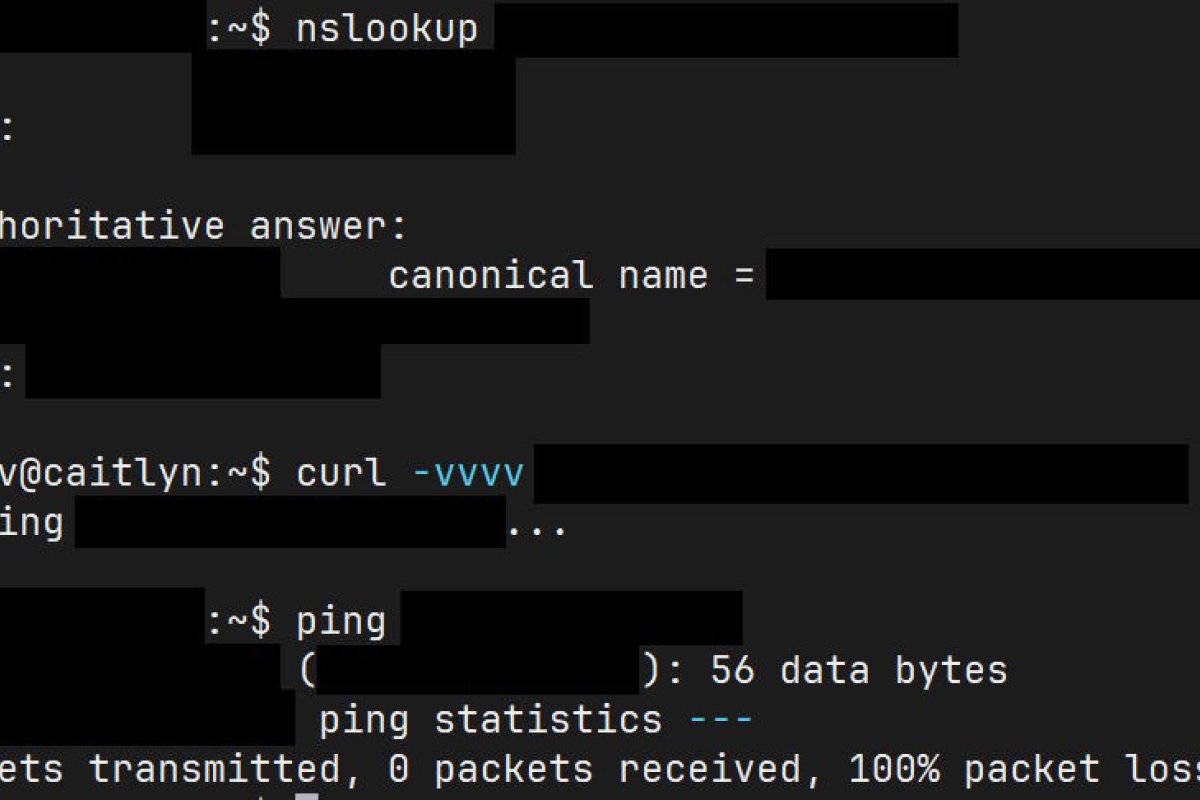

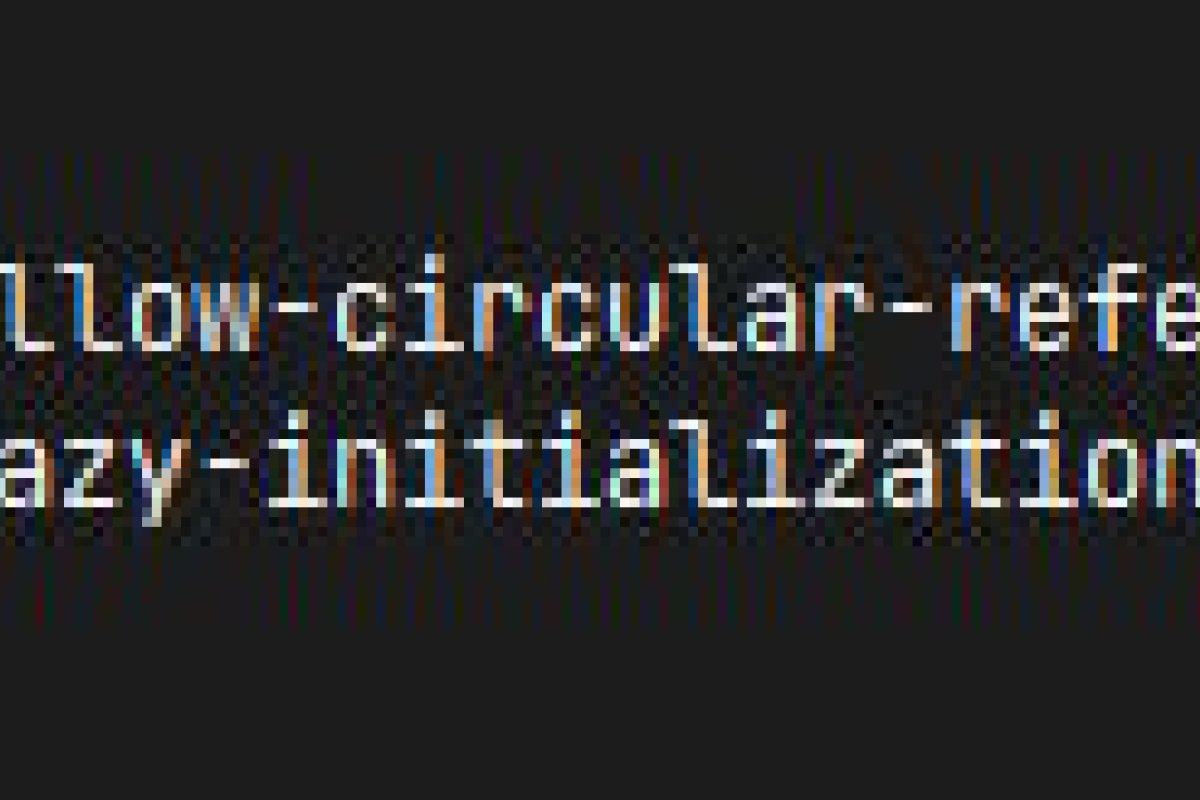

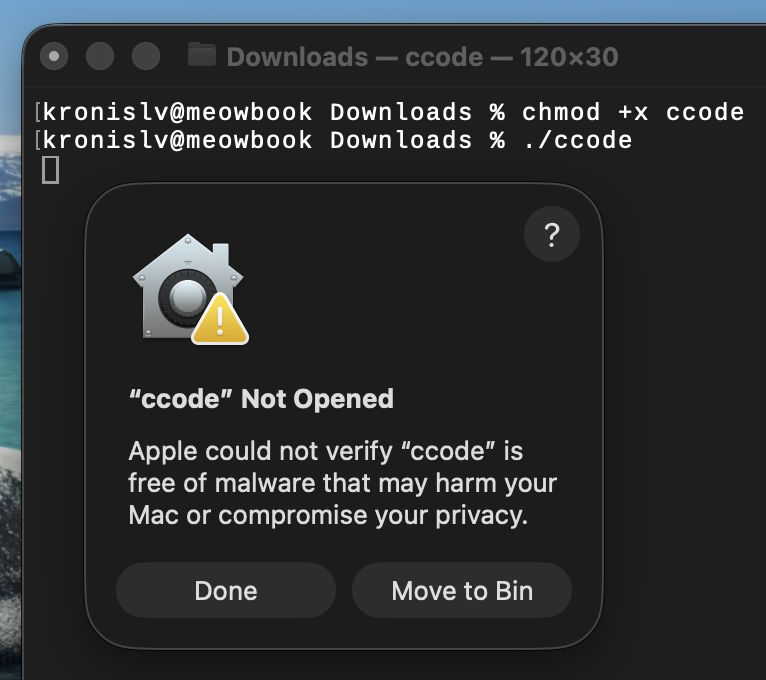

It does not just work for macOS, and my MacBook instead shows me this:

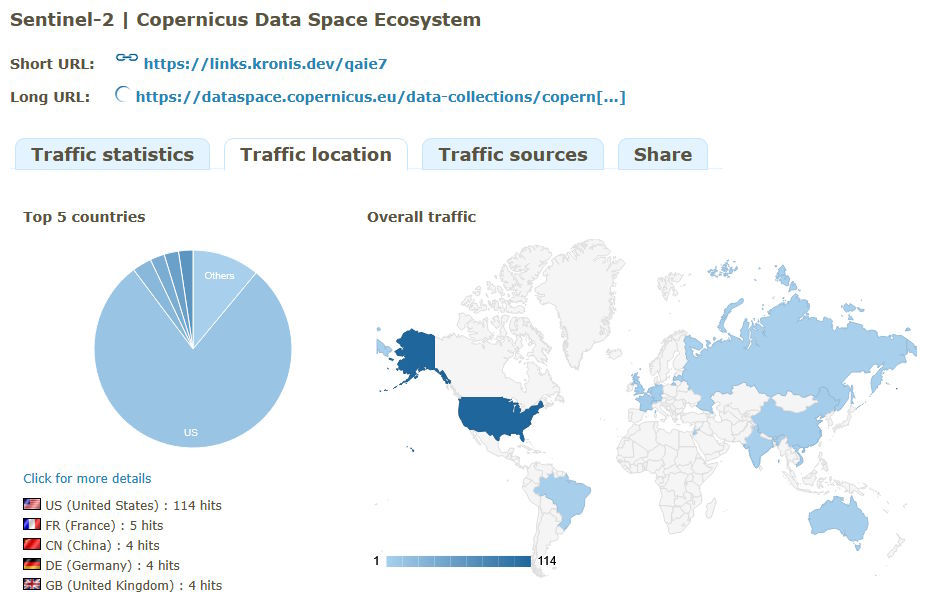

What you see is their quarantine kicking in for downloaded software, even if I share it with myself over Nextcloud.

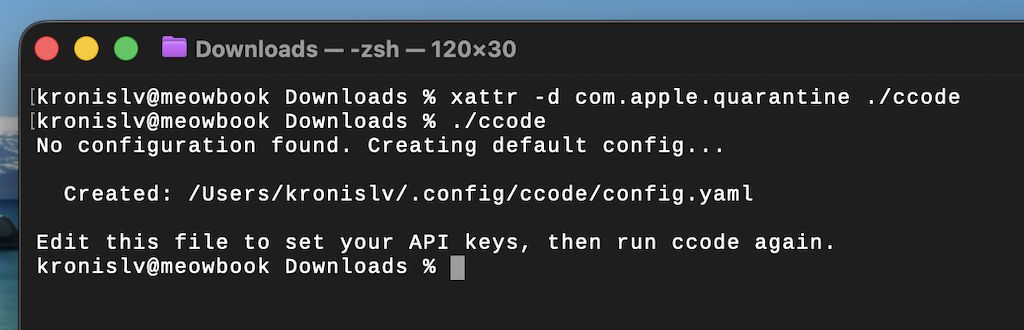

Technically, you can ask your users to override it manually, in the terminal:
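A minimal sketch of such an override, assuming the downloaded binary sits at a path like `./ccode` (a placeholder): the core of it is a single `xattr -d com.apple.quarantine` call, which removes the extended attribute that macOS attaches to downloaded files. The helper name `unquarantine` is made up for this example.

```shell
#!/bin/sh
# Sketch: clear the quarantine flag macOS puts on downloaded files.
# The function name and any path passed to it are placeholders.
unquarantine() {
  if [ "$(uname -s)" != "Darwin" ]; then
    echo "not macOS, nothing to do"
    return 0
  fi
  # xattr -p prints the attribute and fails if it is not set.
  if xattr -p com.apple.quarantine "$1" >/dev/null 2>&1; then
    xattr -d com.apple.quarantine "$1"
  else
    echo "$1 is not quarantined"
  fi
}
```

A user would then run something like `unquarantine ./ccode` once after downloading.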

Most developers might be willing to do that. It is not, however, a good user experience and might raise some eyebrows.

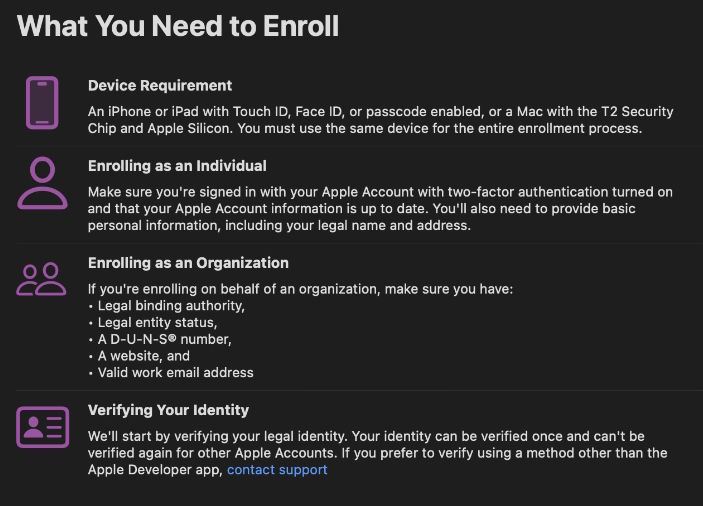

Doesn't seem like such a big deal, right? I'll just enroll in their Apple Developer Program, sign the executable and be on my way, right?

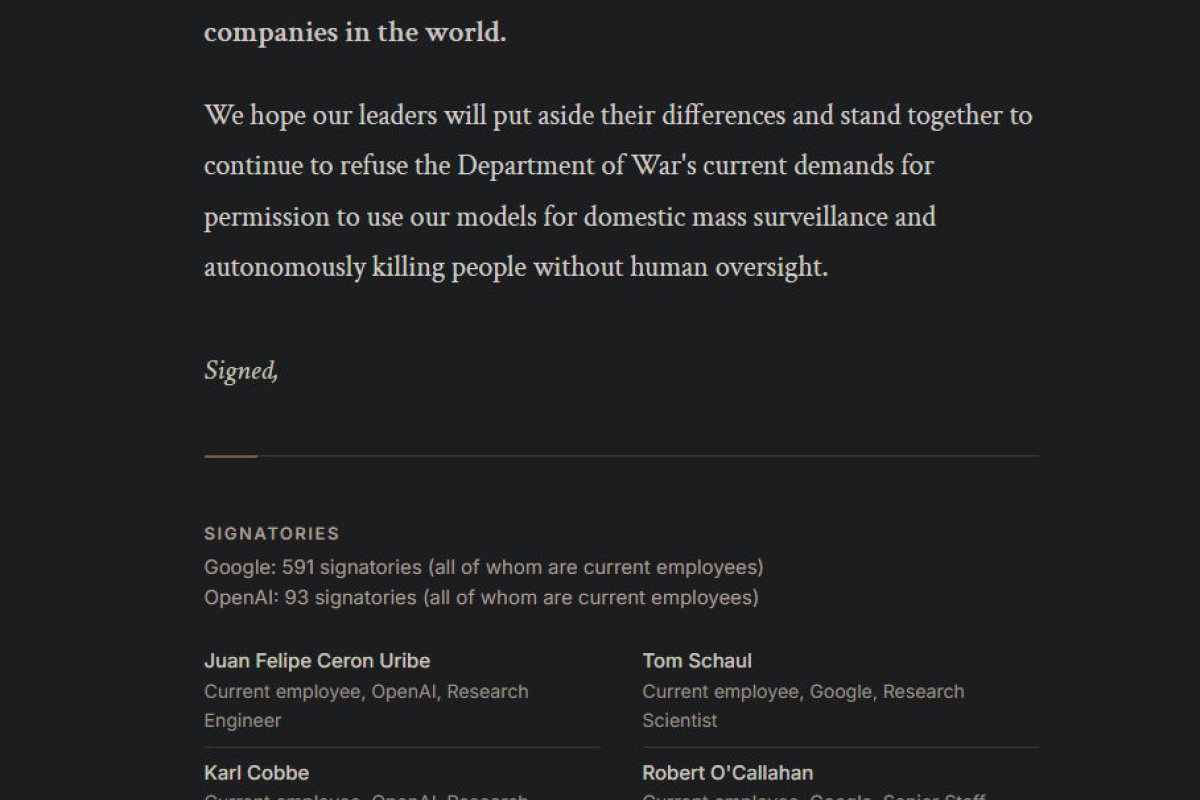

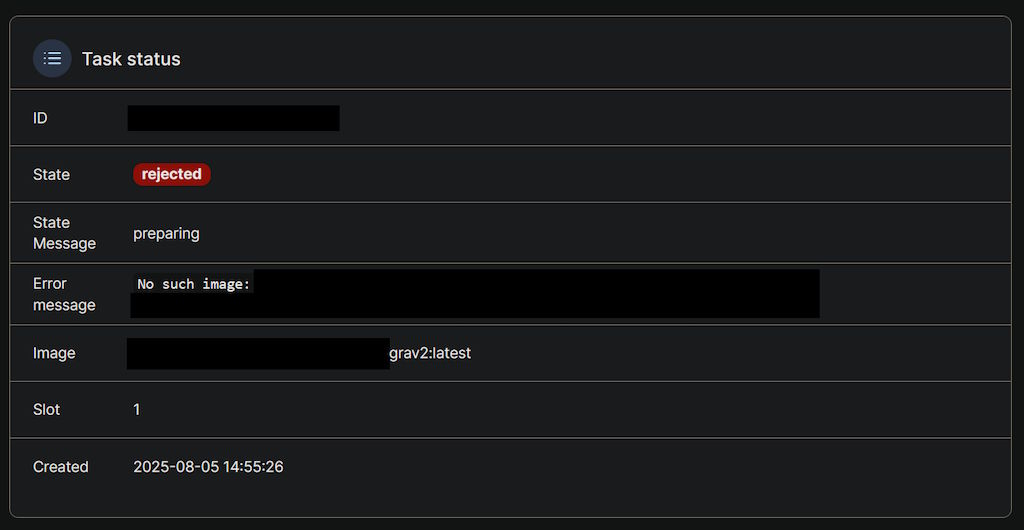

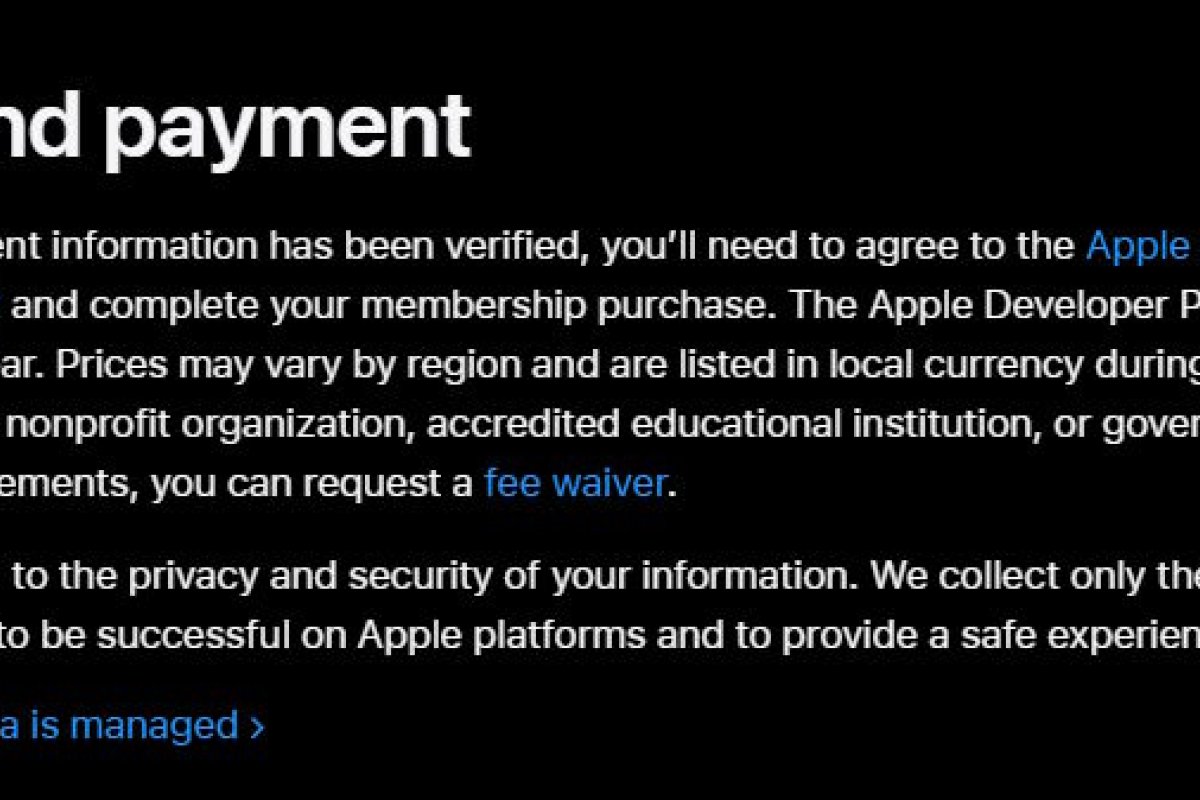

Giving Apple money, and failing

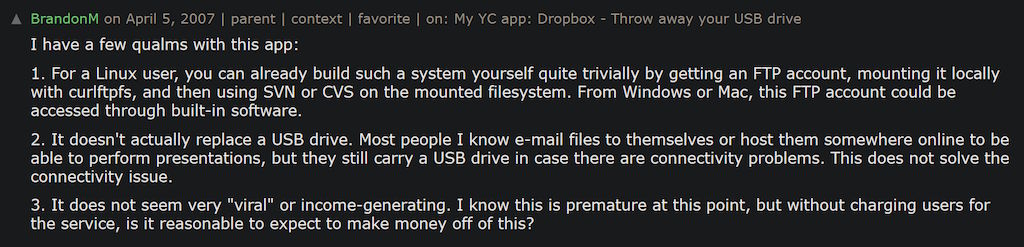

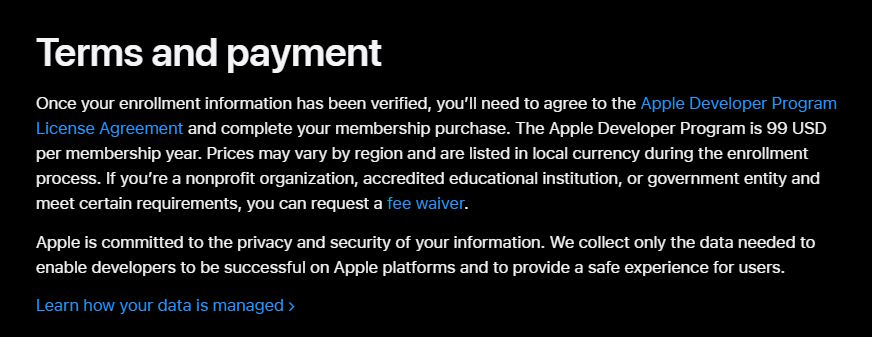

Wait, they want how much money for the account?

And it's a yearly subscription? My brother in Christ, I intend to release a utility that maybe a dozen or two dozen people are going to download, tops, for like 7 USD on Itch.io with a pay-what-you-want model, meaning that most of those people will probably choose the price of 0 USD instead (I don't intend to be like Apple; people have various circumstances).

That means that even if it works out to that much, there's going to be VAT, and Itch.io will also take a cut, so out of those maybe 50 USD I'll get about 25 USD, which covers about 3 months of the Apple Developer Program fee. I guess the reason for it being priced like that lies somewhere between greed and wanting to gatekeep hobbyists and only support Serious Users™, but it seems a bit stupid. Oh well, I already had to get the overpriced MacBook for another freelance thing, because they also won't let me compile macOS/iOS apps on Windows or Linux, so I guess this is just them spitting on me after slapping me in the face.

What I get from that is that articles like An app can be a home-cooked meal are cool but don't take the economics of wanting to release something publicly into account -...