Digest: r/selfhosted: May 01 - May 08, 2026

Published: 5 days ago | Author: System

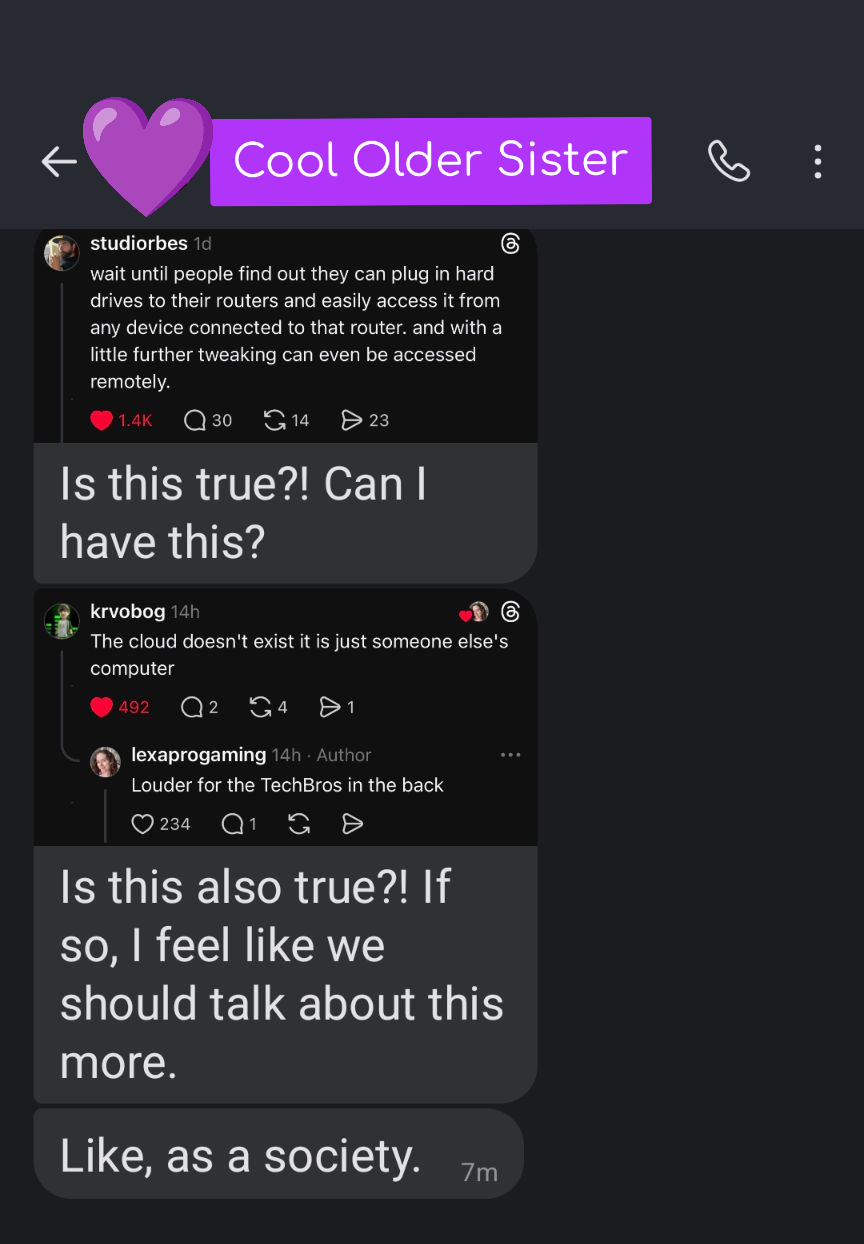

She may come to regret asking.

Buckle up sis, that's just the top 10% of the iceberg.

⬆️ 997 points | 💬 65 comments

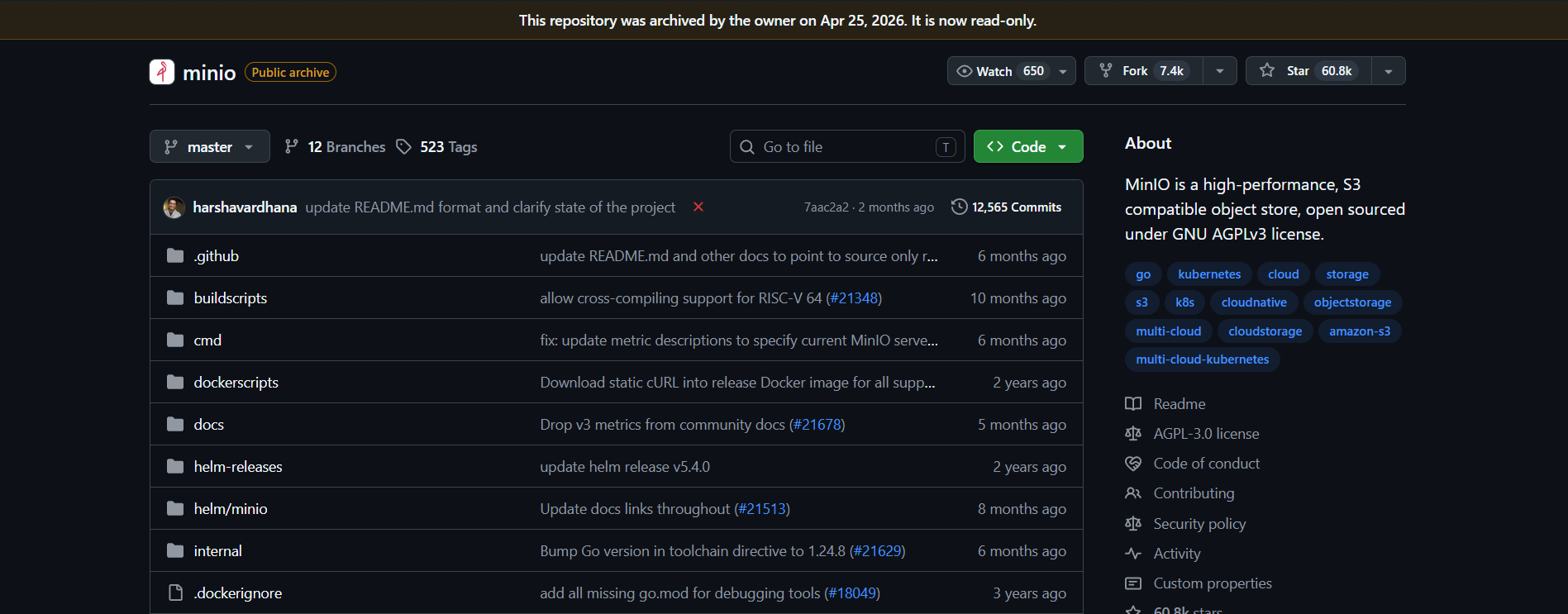

Patch your servers, peeps, new Linux kernel vulnerability just dropped

CopyFail just dropped. It's a new Linux kernel vulnerability that gives attackers root privileges. https://arstechnica.com/security/2026/04/as-the-most-severe-linux-threat-in-years-surfaces-the-world-scrambles/

Debian has an updated kernel, Proxmox too. Looks like Raspberry Pi hasn't released an updated version yet.

⬆️ 366 points | 💬 162 comments

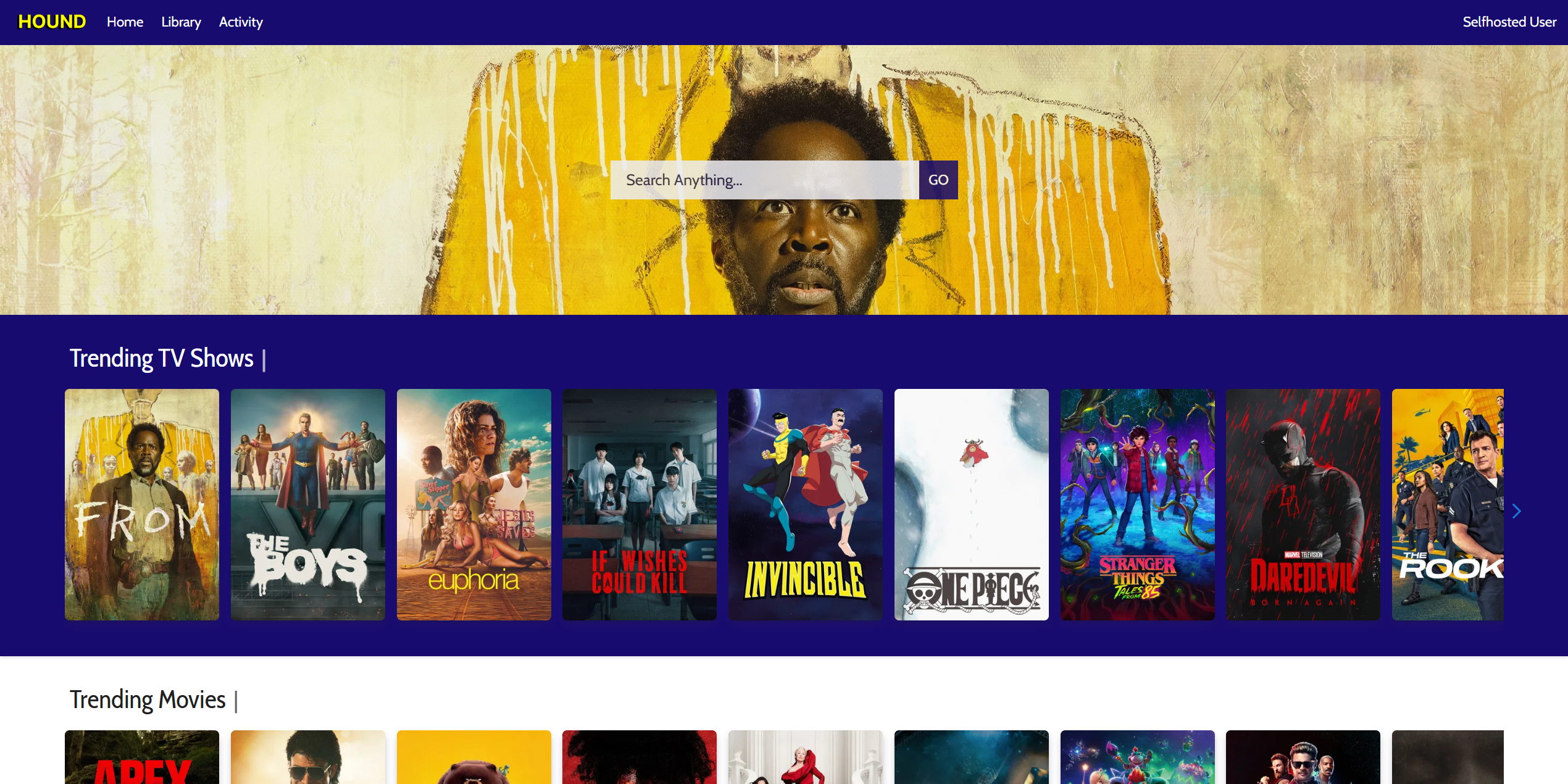

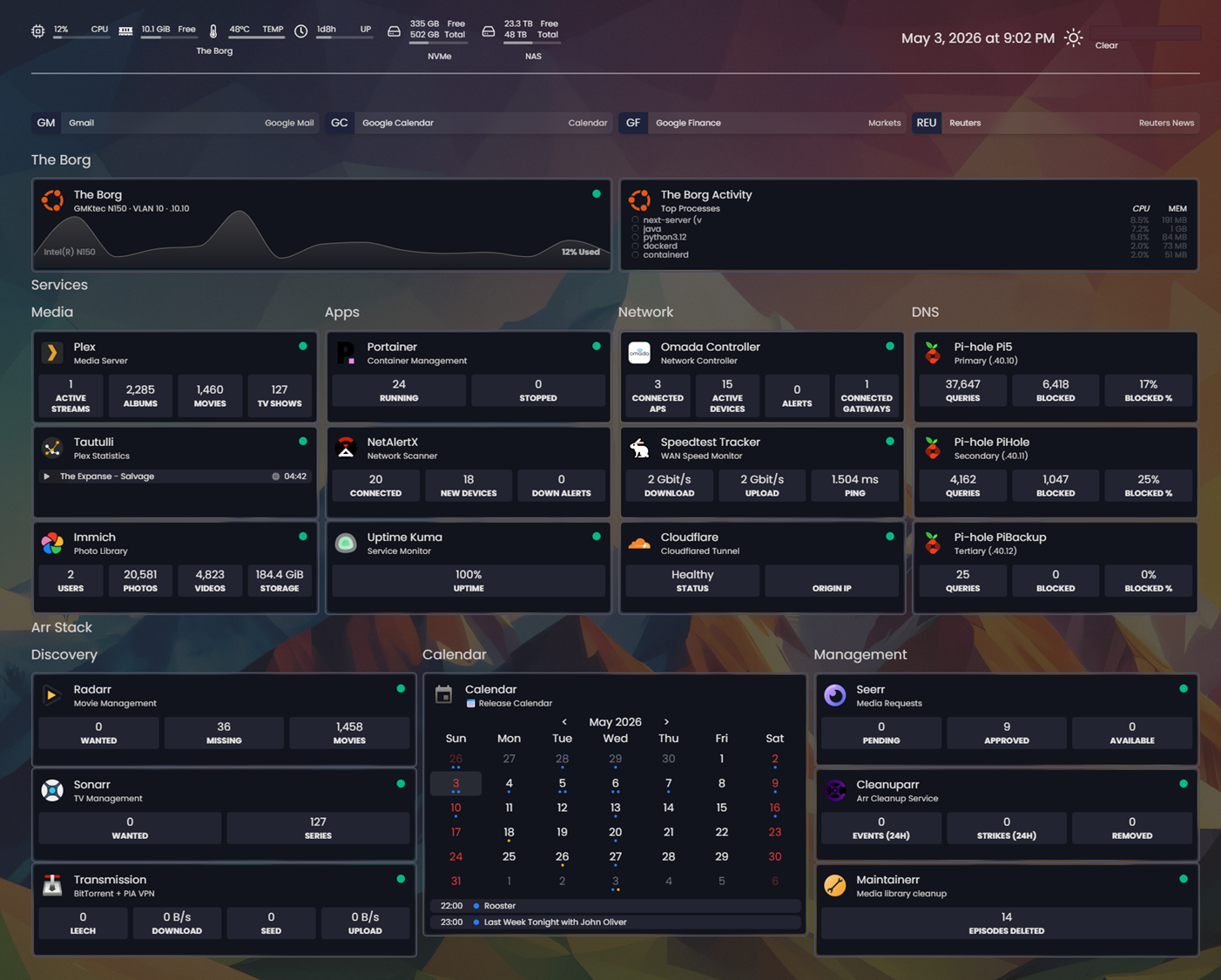

A homepage dashboard I'm finally happy with.

⬆️ 399 points | 💬 42 comments

Vaultwarden 1.36.0 patches vulnerabilities

https://github.com/dani-garcia/vaultwarden/releases/tag/1.36.0

Security fixes

This release contains security fixes for the following advisories. We strongly advise updating as soon as possible.

SSO Login CSRF - GHSA-pfp2-jhgq-6hg5, GHSA-w6h6-8r66-hcv7

User/Organization Enumeration - GHSA-hxqh-ff5p-wfr3

SSO existing-user binding - GHSA-j4j8-gpvj-7fqr

GHSA-6x5c-84vm-5j56

SSRF via Icon Endpoint - GHSA-72vh-x5jq-m82g

Some crates updated and other minor security enhancements

These are private for now, pending CVE assignment.


⬆️ 254 points | 💬 14 comments

3-2-1 rule: how are you all doing it without breaking the bank?

So my NAS is slowly getting big, with around 8 TB of data.

I run it on RAID 1, but for the worst-case scenario I also want an off-site backup. But obviously 8 TB+ in the cloud is going to be expensive, no?

How are you guys storing your offline backup? And where do you guys store it?
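
For anyone unsure what the rule asks for: 3-2-1 means at least three copies of the data, on at least two different kinds of media, with at least one copy off-site. A rough sketch of that check is below. The `Copy` class, the media labels, and the example backup plan are all made up for illustration; only the RAID 1 NAS comes from the post.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Copy:
    name: str      # hypothetical label for this copy of the data
    medium: str    # hypothetical media type, e.g. "nas", "usb-hdd", "cloud"
    offsite: bool  # True if the copy lives at a different physical location


def satisfies_3_2_1(copies: list[Copy]) -> bool:
    """Return True if the copies meet the 3-2-1 rule."""
    enough_copies = len(copies) >= 3
    enough_media = len({c.medium for c in copies}) >= 2
    has_offsite = any(c.offsite for c in copies)
    return enough_copies and enough_media and has_offsite


# RAID 1 mirrors disks inside one box, so the NAS is treated as a single copy.
current = [Copy("nas", "nas", offsite=False)]

# One possible (assumed) plan: a local external drive plus a drive kept elsewhere.
planned = current + [
    Copy("usb drive at home", "usb-hdd", offsite=False),
    Copy("drive at a relative's house", "usb-hdd", offsite=True),
]

print(satisfies_3_2_1(current))
print(satisfies_3_2_1(planned))
```

Treating the RAID 1 array as one copy is the key design choice here: a mirror protects against a disk failure, not against deletion, ransomware, or losing the whole box.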

⬆️ 264 points | 💬 196 comments

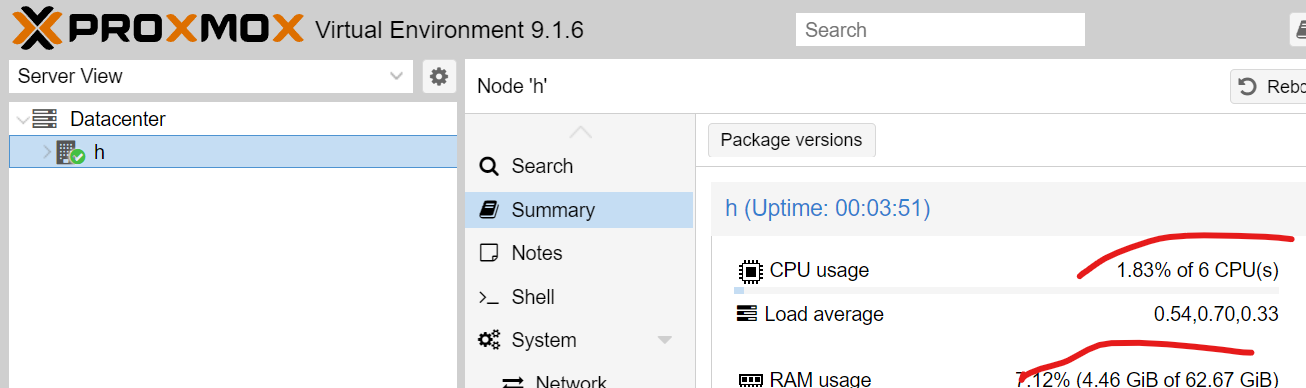

PSA for anyone not using LXCs on Proxmox

The Point: Holy shit, LXCs are so cool, and getting "free" RAM back felt like black magic. If you're newer, like me, and have just been using VMs instead of LXCs, you should look at changing that.

I started my server back in November knowing absolutely nothing about using Linux, using CLI, or Docker. At the same time, I also went in raw, jumping straight into Proxmox on three nodes. As a result, I ended up using a lot of the Proxmox VE Helper Scripts for initial setup and have since gone back and learned how to do a lot of things myself. One of the hugely inefficient decisions I made at the time was to use a VM for Docker instead of an LXC.

For context, two of my nodes are running an i3-5005U and 8 GB of soldered DDR3 RAM. One of those machines was exclusively running a VM to run Docker containers largely centered around downloads. On average, I was hitting ~30-50% CPU on the PVE host and ~7 GB RAM usage.

Switching to an LXC has brought that down to 10-25% CPU and ~2-2.5GB RAM usage. A machine that felt like it was at its limit suddenly gained immense amounts of headroom.

Just wanted to put this out there for anyone procrastinating switching some VMs to LXCs. In my case, it was worth the relatively low amount of effort to free up such a significant amount of resources.
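
To put the RAM numbers from the post in perspective, here is the arithmetic as a tiny sketch. The figures are the approximate ones quoted above (8 GB total, ~7 GB with the VM, ~2-2.5 GB with the LXC); the variable names are just for illustration, and results on other hardware or workloads will differ.

```python
TOTAL_RAM_GB = 8.0       # soldered RAM on the node, per the post
VM_RAM_GB = 7.0          # approximate usage while Docker ran in a VM
LXC_RAM_GB = (2.0, 2.5)  # approximate usage range after moving to an LXC

# RAM freed by the switch, for the high and low end of the LXC range.
freed_low = VM_RAM_GB - max(LXC_RAM_GB)
freed_high = VM_RAM_GB - min(LXC_RAM_GB)

# Share of total RAM in use before and after.
share_before = VM_RAM_GB / TOTAL_RAM_GB
share_after = tuple(used / TOTAL_RAM_GB for used in LXC_RAM_GB)

print(f"Freed: {freed_low:.1f} to {freed_high:.1f} GB")
print(f"In use before: {share_before:.1%}")
print(f"In use after: {share_after[0]:.1%} to {share_after[1]:.1%}")
```

In other words, roughly 4.5 to 5 GB of an 8 GB machine came back, which matches the "immense amounts of headroom" description.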

⬆️ 251 points | 💬 83 comments

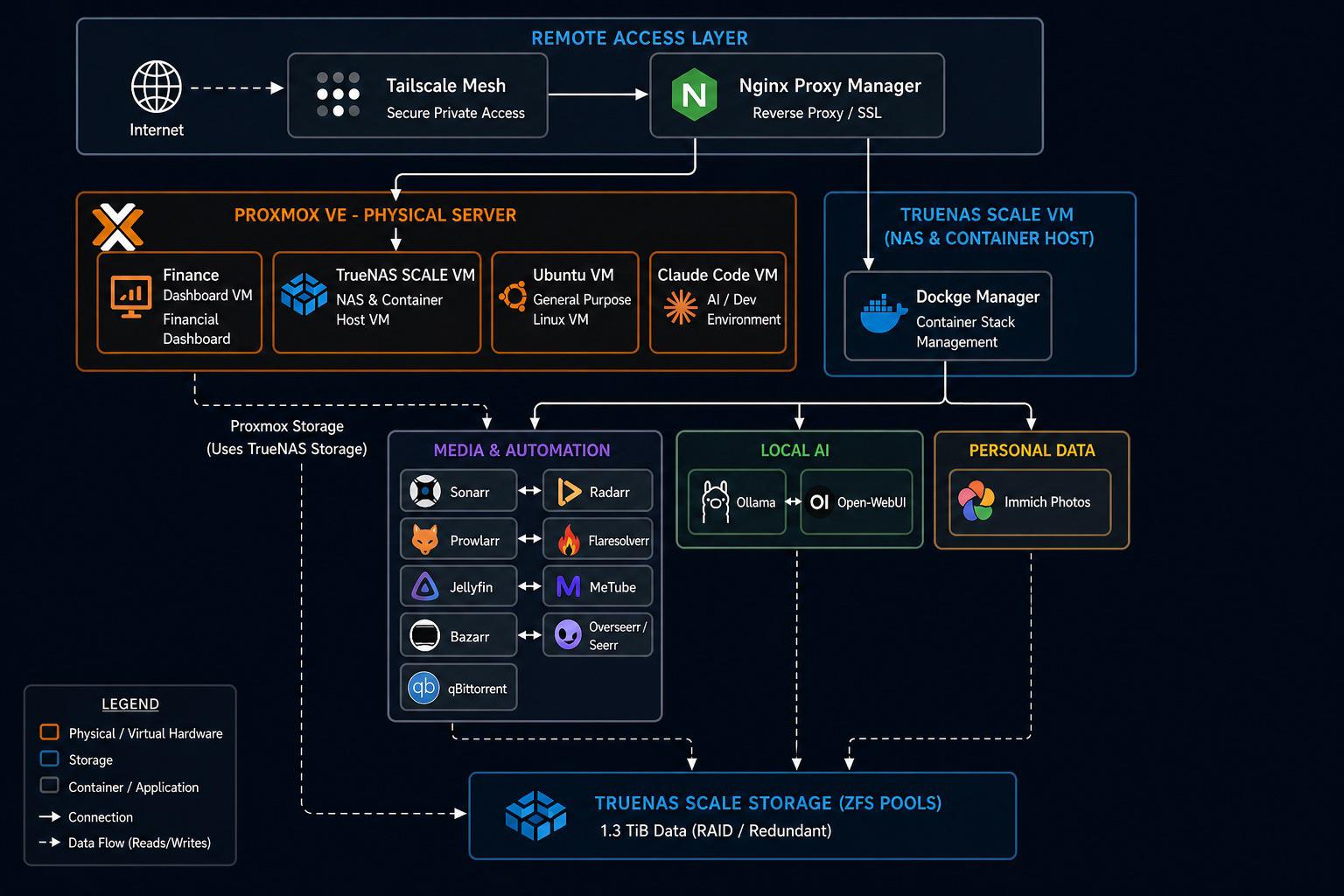

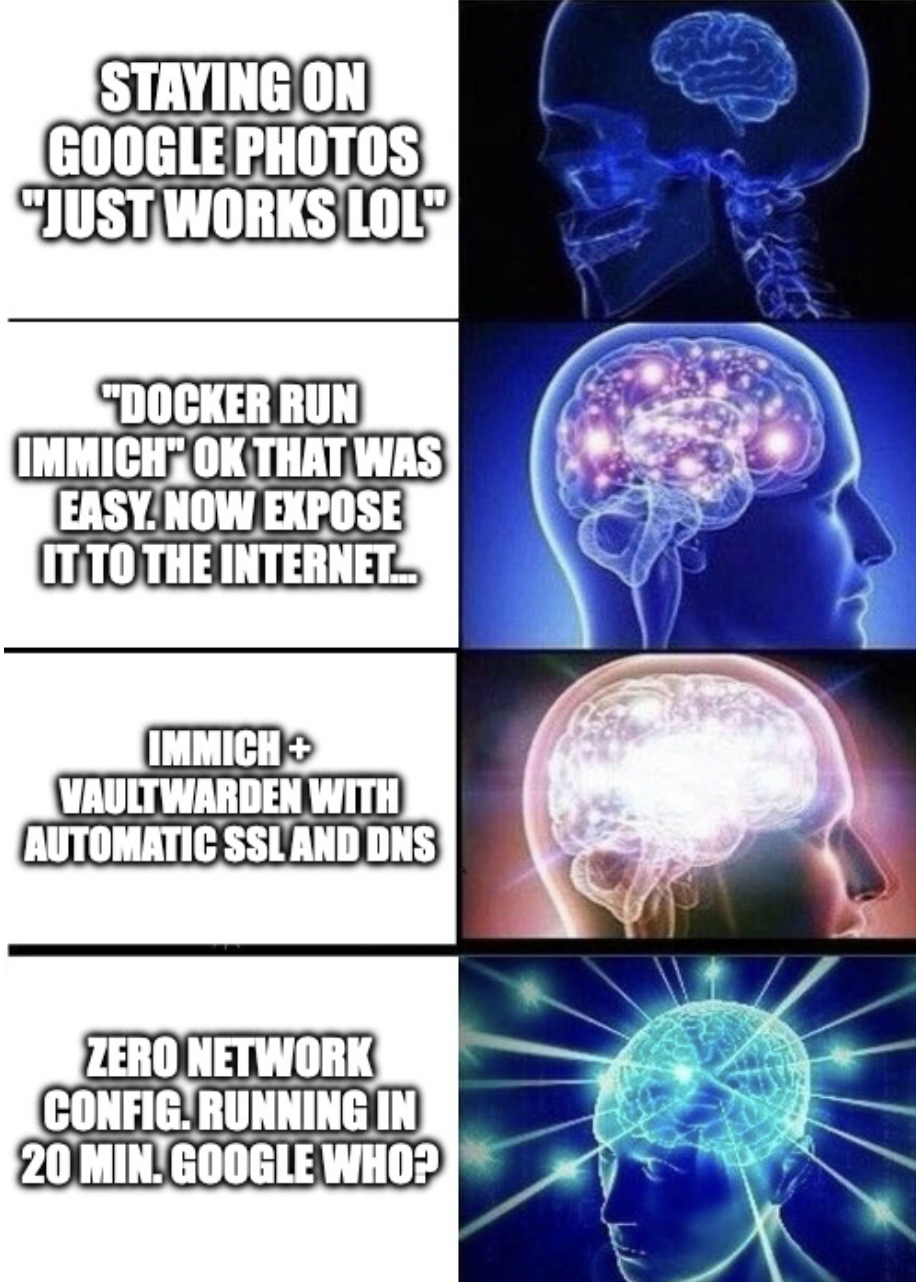

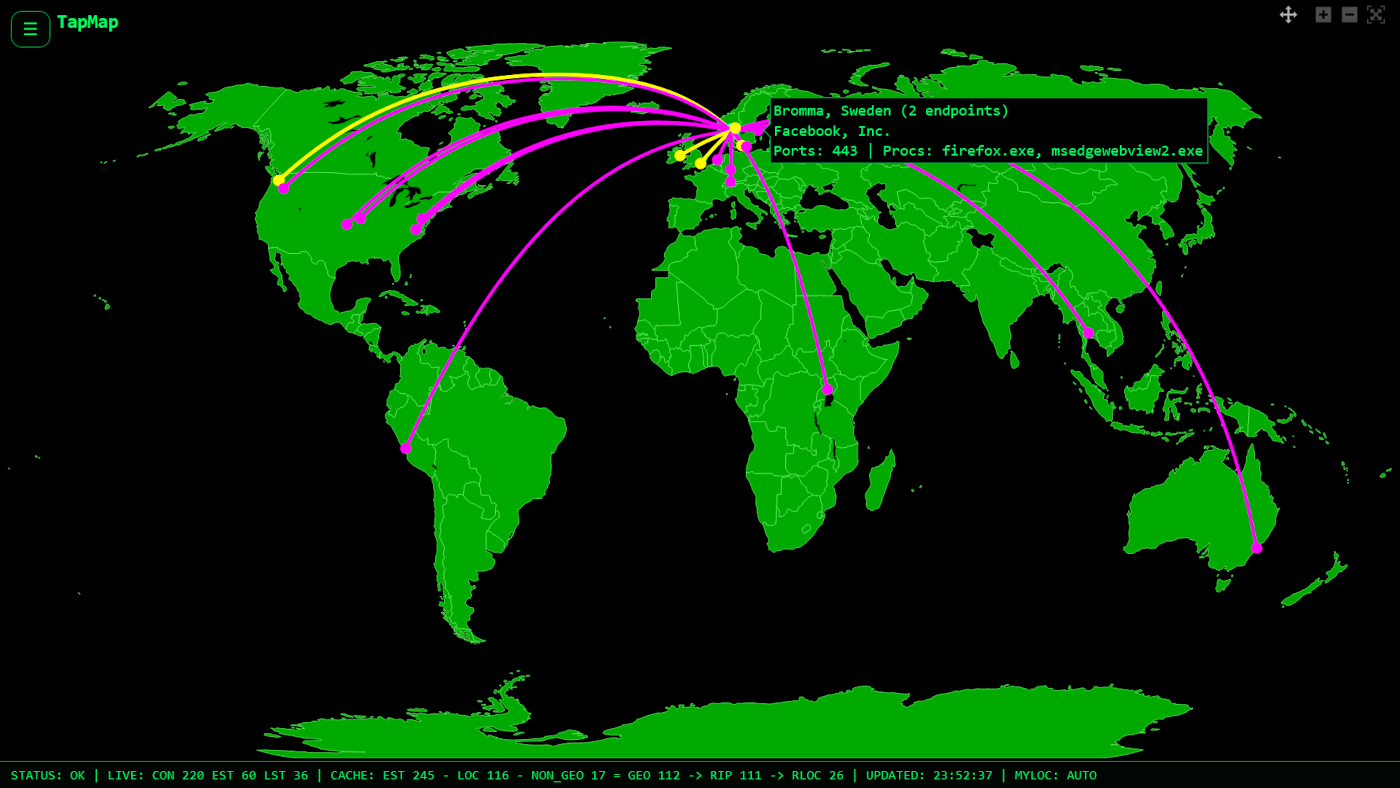

Appreciation post: Tailscale and Headscale

These two are the most incredible technologies on the modern Internet. The Web is finally free and open again, just as Tim Berners-Lee intended it so many decades ago at CERN. People are finally taking the Web back from corporations, and it is amazing to see. Tailscale is going to be the biggest tech company in the world by the next decade, and the GTA will overtake the Bay Area as the world's tech capital.

⬆️ 232 points | 💬 75 comments